Abstract

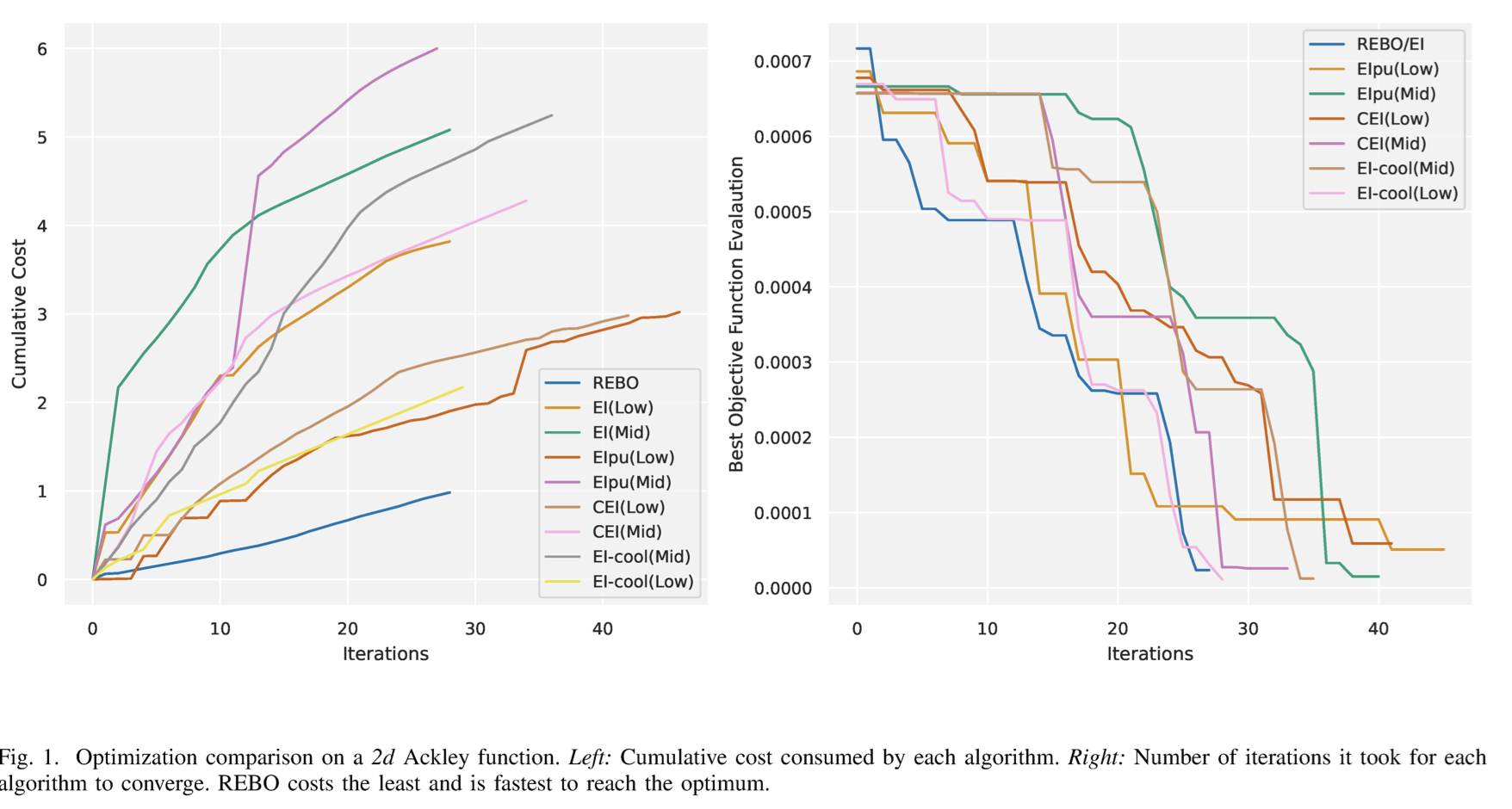

We propose a resource-efficient Bayesian Optimization (BO) formulation that can provide the same convergence guarantees as traditional BO while ensuring that the optimization makes efficient use of the available cloud or high-performance computing (HPC) resources. The paper is motivated by the observation that for many optimization problems well suited to BO, such as hyper-parameter optimization for training large machine learning models, the cost of a single function evaluation depends on both the model parameters and the system parameters. The proposed Resource Efficient Bayesian Optimization (REBO) algorithm is a novel formulation that exploits this dependence and provides significant cost benefits for users who want to deploy BO on cloud and HPC platforms, which offer compute resources with varying costs and expected performance benefits. We demonstrate the effectiveness of REBO, in terms of convergence and resource efficiency, on a variety of machine learning hyper-parameter optimization applications.
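To make the idea of evaluation cost depending on system parameters concrete, the sketch below ranks candidate evaluations by expected improvement per unit cost. This is a common cost-aware heuristic used here purely for illustration; it should not be read as the REBO acquisition function, which the abstract does not specify. The candidate names, posterior means and standard deviations, and costs are all hypothetical, and the objective (for example, validation loss) is assumed to be minimized.

```python
import math


def expected_improvement(mu, sigma, best):
    """Closed-form expected improvement for minimization,
    assuming a Gaussian posterior N(mu, sigma^2) at the candidate."""
    if sigma <= 0.0:
        # No uncertainty: improvement is deterministic.
        return max(best - mu, 0.0)
    z = (best - mu) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (best - mu) * cdf + sigma * pdf


def pick_next(candidates, best):
    """Return the candidate with the highest EI per unit of evaluation cost."""
    return max(
        candidates,
        key=lambda c: expected_improvement(c["mu"], c["sigma"], best) / c["cost"],
    )


# Hypothetical candidates: each pairs a model setting with a system setting.
# The same model setting has the same predicted loss on either system,
# but a different (assumed) cost per evaluation.
candidates = [
    {"name": "lr=0.1 on 8 GPUs", "mu": 0.30, "sigma": 0.05, "cost": 8.0},
    {"name": "lr=0.1 on 1 GPU", "mu": 0.30, "sigma": 0.05, "cost": 2.0},
    {"name": "lr=0.01 on 1 GPU", "mu": 0.40, "sigma": 0.01, "cost": 1.0},
]

choice = pick_next(candidates, best=0.32)
print(choice["name"])
```

In this toy setup the two `lr=0.1` candidates have identical expected improvement, so the cheaper system configuration wins, while the cheapest candidate overall is rejected because its predicted loss is well above the current best.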

BibTeX

@INPROCEEDINGS{10643903,

author={Juneja, Namit and Chandola, Varun and Zola, Jaroslaw and Wodo, Olga and Desai, Parth},

booktitle={2024 IEEE 17th International Conference on Cloud Computing (CLOUD)},

title={Resource Efficient Bayesian Optimization},

year={2024},

volume={},

number={},

pages={12-19},

keywords={Training;Cloud computing;Costs;Computational modeling;Optimization methods;Machine learning;Bayes methods;Bayesian optimization;Resource-efficient optimization;Expected Improvement;Gaussian processes;active learning},

doi={10.1109/CLOUD62652.2024.00012}}